If you've been following the innovative work of agricultural entomologist and remote sensing technology researcher Christian Nansen, associate professor of entomology at the University of California, Davis, you can.

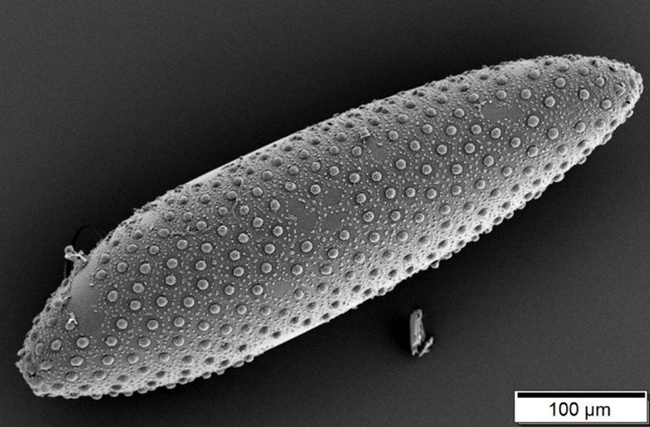

Using Skittles (candy), magnolia leaves, mosquito eggs and sheets of paper, Nansen explored how light penetrates and scatters--and found that how you see an object can depend on what is next to it, under it or behind it.

He published his observations in a recent edition of PLOS ONE, the Public Library of Science's peer-reviewed, open-access journal. He researches the discipline of remote sensing technology, which he describes as “crucial to studying insect behavior and physiology, as well as management of agricultural systems.”

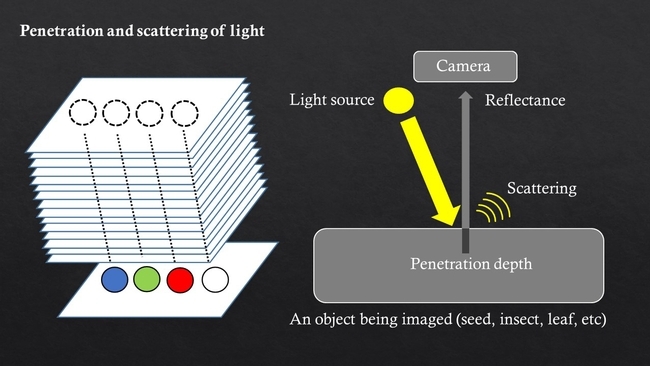

Nansen demonstrated that several factors greatly influence the reflectance data acquired from an object. “The reflected energy from an object--how it looks--is a complex cocktail of energy being scattered off the object's surface in many directions and of energy penetrating into the object before being reflected,” Nansen pointed out. “Because of scattering of light, the appearance--or more accurately the reflectance profile--of an object depends on what is next to it! And because of penetration, the appearance of an object may also be influenced by what is behind it!”

“The findings are of considerable relevance to research into development of remote sensing technologies, machine vision, and/or optical sorting systems as tools to classify/distinguish insects, seeds, plants, pharmaceutical products, and food items.”

In the PLOS ONE article, titled “Penetration and Scattering—Two Optical Phenomena to Consider When Applying Proximal Remote Sensing Technologies to Object Classifications,” Nansen defines proximal remote sensing as “acquisition and classification of reflectance or transmittance signals with an imaging sensor mounted within a short distance (under 1m and typically much less) from target objects.”

“Even though the objects may look very similar--that is, indistinguishable--to the human eye, there are minute/subtle differences in reflectance in some spectral bands,” Nansen said, “and these differences can be detected and used to classify objects.”

With this newly published study, Nansen has demonstrated experimentally that imaging conditions need to be carefully controlled and standardized. Otherwise, he said, “penetration and scattering can negatively affect the quality of reflectance data, and therefore, the potential of remote sensing technologies, machine vision, and/or optical sorting systems as tools to classify objects.”

Nansen described the rapidly growing number of studies describing applications of proximal remote sensing as “largely driven by the technology becoming progressively more robust, cost-effective, and also user-friendly.”

“The latter,” he wrote, “means that scientists who come from a wide range of academic backgrounds become involved in applied proximal remote sensing applications without necessarily having the theoretical knowledge to appreciate the complexity and importance of phenomena associated with optical physics; the author of this article falls squarely in that category!”

“Sometimes experimental research unravels limitations and challenges associated with the methods or technologies we use and thought we were so-called experts on,” Nansen commented.

Nansen, who specializes in insect ecology, integrated pest management, and remote sensing, joined the UC Davis faculty in 2014 after holding faculty positions at Texas A&M, Texas Tech and, most recently, the University of Western Australia.
